3Blue1Brown

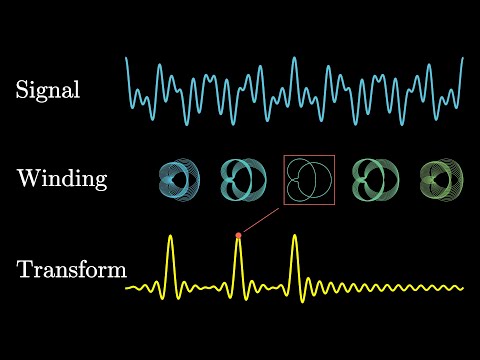

But what is the Fourier Transform? A visual introduction.

Code for all the videos: github.com/3b1b/videos

Manim: github.com/3b1b/manim

Community edition: github.com/ManimCommunity/manim

Example scenes shown near the end: github.com/3b1b/manim/blob/master/example_scenes.py

I added some more details about the workflow shown in this video to the readme of the videos repo: github.com/3b1b/videos?tab=readme-ov-file#workflow

These lessons are funded directly by viewers: 3b1b.co/support

Timestamps:

0:00 - Intro

2:39 - Hello World

10:32 - Coding up a Lorenz attractor

23:46 - Add some tracking points

28:52 - The globals().update(locals()) hack

32:57 - Final styling on the scene

41:42 - Rendering the scene

44:35 - Adding equations

48:43 - Where to start
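
For readers curious about the globals().update(locals()) hack named in the 28:52 chapter, here is a minimal sketch of what that one-liner does in plain Python. The names build_scene_state, radius, n_points, and describe are hypothetical and not taken from the video's code. The assumption is that the hack is used so that names created inside a method stay reachable from code run afterwards at module level, for example in an interactive session.

```python
def build_scene_state():
    # Hypothetical local variables, standing in for objects built inside a scene method.
    radius = 2.0
    n_points = 10
    # Copy every local name of this function into the module-level namespace.
    globals().update(locals())


def describe():
    # Looks up `radius` and `n_points` as globals, so it only works
    # once build_scene_state() has run and pushed them there.
    return f"{n_points} points on a circle of radius {radius}"


build_scene_state()
print(describe())
```

This trades namespace hygiene for convenience, so it is probably best kept to interactive experimentation rather than library code.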

SEV2: youtu.be/XEafCqcwBLs

------------------

These animations are largely made using a custom Python library, manim. See the FAQ comments here:

3b1b.co/faq#manim

github.com/3b1b/manim

github.com/ManimCommunity/manim

All code for specific videos is visible here:

github.com/3b1b/videos

The music is by Vincent Rubinetti.

vincentrubinetti.com

vincerubinetti.bandcamp.com/album/the-music-of-3blue1brown

open.spotify.com/album/1dVyjwS8FBqXhRunaG5W5u

------------------

3blue1brown is a channel about animating math, in all senses of the word animate. If you're reading the bottom of a video description, I'm guessing you're more interested than the average viewer in lessons here. It would mean a lot to me if you chose to stay up to date on new ones, either by subscribing here on YouTube or otherwise following on whichever platform below you check most regularly.

Mailing list: 3blue1brown.substack.com

Twitter: twitter.com/3blue1brown

Instagram: instagram.com/3blue1brown

Reddit: reddit.com/r/3blue1brown

Facebook: facebook.com/3blue1brown

Patreon: patreon.com/3blue1brown

Website: 3blue1brown.com

Instead of sponsored ad reads, these lessons are funded directly by viewers: 3b1b.co/support

An equally valuable form of support is to share the videos.

Hologram credits:

The Microscope is by Walter Spierings, 1984

Donations Hologram by Cherry Optical Holography

Lucy in a Tin Hat is by Patrick Keown Boyd, 1988

The Star Wars-themed Direct-Write Digital Holograms were produced by Zebra Imaging.

The 'Shakespeare' embossed animated integral hologram was made by Applied Holographics.

Walter Spierings, who did the microscope, is from Dutch Holographic Laboratory. He wanted me to let you know that anyone should feel free to approach them when it comes to producing holograms; they do a lot of innovative things with the medium: www.holoprint.com

Thanks to everyone who helped with this project:

Paul Dancstep, for help writing, and for all the 3d modeling

Craig Newswanger and Sally Weber, for making the central hologram shown

Kurt Bruns, for the artwork of Dennis Gabor

Phoebe Tooke, Wayne Grim, and Rick Danielson, for filming at the Exploratorium

Quinn Brodsky and Mithuna Yoganathan, for footage of lasers through diffraction gratings

Vince Rubinetti, for writing the music

Cliff Stoll for the Klein Bottle

Small correction: After the algebra in the end, I say "We don't even make assumptions about R", but that's not quite true. To treat |R^2| as some scaling factor in the expression |R^2| * O, it matters that the amplitude of R is approximately constant around a given point.
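
As a hedged illustration of the algebra this correction refers to, the sketch below assumes the standard textbook model: at a given point the film records the intensity |R + O|^2 of the reference wave R plus the object wave O, and reconstruction multiplies that recording by R. Expanding gives R(|R|^2 + |O|^2) + |R|^2 O + R^2 O*, where the middle term is the object wave scaled by |R|^2 (written |R^2| above). The sample amplitudes and the function name are made up for illustration.

```python
import cmath


def reconstruction_terms(R: complex, O: complex) -> dict:
    """Split R * |R + O|^2 into the three terms of its expansion."""
    intensity = abs(R + O) ** 2  # real-valued exposure recorded at this point
    return {
        "total": R * intensity,
        "background": R * (abs(R) ** 2 + abs(O) ** 2),
        "object_copy": abs(R) ** 2 * O,        # object wave times the factor |R|^2
        "conjugate": R ** 2 * O.conjugate(),   # the conjugate-image term
    }


# Arbitrary sample values: amplitude and phase of each wave at one point.
R = cmath.rect(2.0, 0.7)
O = cmath.rect(0.5, -1.3)

terms = reconstruction_terms(R, O)
recombined = terms["background"] + terms["object_copy"] + terms["conjugate"]
residual = abs(terms["total"] - recombined)
print(residual)  # should be zero up to floating-point rounding
```

The factor |R|^2 only behaves like a plain scaling of O if the amplitude of R barely changes from point to point, which is the assumption the correction calls out.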

Gabor's Nobel Prize lecture:

nobelprize.org/uploads/2018/06/gabor-lecture.pdf

A few resources we found helpful for this video

Seeing the Light, by Falk, Brill, and Stork

amzn.to/3Ngdiqh

Practical Holography, by Saxby and Zacharovas

amzn.to/3ZR2MNN

Principles of Holography by Howard Smith

amzn.to/3ZOihFZ

Timestamps

0:00 - What is a Hologram?

3:28 - The recording process

11:45 - The simplest hologram

17:12 - Diffraction gratings

25:15 - Reconstructing the simplest hologram

28:24 - Conjugate image

31:11 - More complex scenes

35:58 - The bigger picture of holography

38:27 - The formal explanation

SEV1: youtu.be/iBYotKfYRQ0

------------------

See Matt Parker's video for more:

youtu.be/ga9Qk38FaHM

The next short finishes the explanation: youtube.com/shorts/lpzUZDefha0

The next short explains why: youtube.com/shorts/sNWDjbaT208

AI Alignment forum post from the DeepMind researchers referenced at the video's start:

alignmentforum.org/posts/iGuwZTHWb6DFY3sKB/fact-finding-attempting-to-reverse-engineer-factual-recall

Anthropic posts about superposition referenced near the end:

https://transformer-circuits.pub/2022/toy_model/index.html

https://transformer-circuits.pub/2023/monosemantic-features

Some added resources for those interested in learning more about mechanistic interpretability, offered by Neel Nanda

Mechanistic interpretability paper reading list

alignmentforum.org/posts/NfFST5Mio7BCAQHPA/an-extremely-opinionated-annotated-list-of-my-favourite

Getting started in mechanistic interpretability

neelnanda.io/mechanistic-interpretability/getting-started

An interactive demo of sparse autoencoders (made by Neuronpedia)

neuronpedia.org/gemma-scope#main

Coding tutorials for mechanistic interpretability (made by ARENA)

https://arena3-chapter1-transformer-interp.streamlit.app/

Sections:

0:00 - Where facts in LLMs live

2:15 - Quick refresher on transformers

4:39 - Assumptions for our toy example

6:07 - Inside a multilayer perceptron

15:38 - Counting parameters

17:04 - Superposition

21:37 - Up next

------------------

Reposted here with permission from the University

Timestamps:

0:00 - End of Harriet Nembhard's introduction

0:45 - The cliché

2:28 - The shifting goal

5:57 - Action precedes motivation

7:02 - Timing

10:47 - Know your influence

12:05 - Anticipate change

------------------

Special thanks to these supporters: 3blue1brown.com/lessons/attention#thanks

An equally valuable form of support is to simply share the videos.

Demystifying self-attention, multiple heads, and cross-attention.

The first pass for the translated subtitles here is machine-generated, and therefore notably imperfect. To contribute edits or fixes, visit translate.3blue1brown.com

And yes, at 22:00 (and elsewhere), "breaks" is a typo.

------------------

Here are a few other relevant resources

Build a GPT from scratch, by Andrej Karpathy

youtu.be/kCc8FmEb1nY

If you want a conceptual understanding of language models from the ground up, @vcubingx just started a short series of videos on the topic:

youtu.be/1il-s4mgNdI?si=XaVxj6bsdy3VkgEX

If you're interested in the herculean task of interpreting what these large networks might actually be doing, the Transformer Circuits posts by Anthropic are great. In particular, it was only after reading one of these that I started thinking of the combination of the value and output matrices as being a combined low-rank map from the embedding space to itself, which, at least in my mind, made things much clearer than other sources.

https://transformer-circuits.pub/2021/framework/index.html

Site with exercises related to ML programming and GPTs

gptandchill.ai/codingproblems

History of language models by Brit Cruise, @ArtOfTheProblem

youtu.be/OFS90-FX6pg

An early paper on how directions in embedding spaces have meaning:

arxiv.org/pdf/1301.3781.pdf
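
The "combined low-rank map" remark above can be made concrete with a small sketch. It assumes a single attention head whose value matrix maps the embedding space down to a smaller head dimension and whose output matrix maps back up. The names W_V and W_O, the tiny dimensions, and the random integer entries are all illustrative assumptions, not values from any real model. The point is only that the product is a square matrix on the embedding space whose rank cannot exceed the head dimension.

```python
from fractions import Fraction
import random


def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def rank(M):
    """Exact rank via Gaussian elimination over the rationals."""
    M = [[Fraction(x) for x in row] for row in M]
    rows, cols = len(M), len(M[0])
    r = 0  # number of pivot rows found so far
    for c in range(cols):
        pivot = next((i for i in range(r, rows) if M[i][c] != 0), None)
        if pivot is None:
            continue  # no pivot in this column
        M[r], M[pivot] = M[pivot], M[r]
        for i in range(r + 1, rows):
            factor = M[i][c] / M[r][c]
            M[i] = [x - factor * y for x, y in zip(M[i], M[r])]
        r += 1
    return r


random.seed(0)
d_model, d_head = 6, 2  # toy sizes; real models are far larger

# Value matrix: embedding space -> head space (d_head x d_model).
W_V = [[random.randint(-3, 3) for _ in range(d_model)] for _ in range(d_head)]
# Output matrix for this head: head space -> embedding space (d_model x d_head).
W_O = [[random.randint(-3, 3) for _ in range(d_head)] for _ in range(d_model)]

W_OV = matmul(W_O, W_V)  # d_model x d_model map from the embedding space to itself
print(len(W_OV), len(W_OV[0]), rank(W_OV))
```

Because every output of W_OV passes through a d_head-dimensional bottleneck, its rank is bounded by d_head no matter how large d_model is.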

------------------

Timestamps:

0:00 - Recap on embeddings

1:39 - Motivating examples

4:29 - The attention pattern

11:08 - Masking

12:42 - Context size

13:10 - Values

15:44 - Counting parameters

18:21 - Cross-attention

19:19 - Multiple heads

22:16 - The output matrix

23:19 - Going deeper

24:54 - Ending

---

Timestamps

0:00 - Predict, sample, repeat

3:03 - Inside a transformer

6:36 - Chapter layout

7:20 - The premise of Deep Learning

12:27 - Word embeddings

18:25 - Embeddings beyond words

20:22 - Unembedding

22:22 - Softmax with temperature

26:03 - Up next
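
For the "Softmax with temperature" chapter listed above, here is a minimal sketch of the usual formulation, assuming the common convention of dividing each logit by a temperature T before exponentiating. The function name and the sample logits are made up for illustration.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Turn a list of logits into probabilities, scaled by a temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    shift = max(scaled)  # subtracting the max avoids overflow in exp
    exps = [math.exp(s - shift) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.1]  # arbitrary example values
cold = softmax_with_temperature(logits, temperature=0.5)
warm = softmax_with_temperature(logits, temperature=5.0)
print(cold)
print(warm)
```

A lower temperature concentrates probability on the largest logit, while a higher one pushes the distribution toward uniform.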

Or, for reference: youtu.be/aXRTczANuIs

Thanks to these viewers for their contributions to translations

Bulgarian: Martin Grozdanov

French: GiveMeChocolate, Yoyodotpy

German: Josh, dlatikay

Hebrew: Omer Tuchfeld

Hindi: rajeshwar-pandey

Spanish: Marcelo Lynch

Thanks to these viewers for their contributions to translations

French: GiveMeChocolate

Hindi: rajeshwar-pandey

Spanish: Yago Iglesias

Or, for reference: youtu.be/pQa_tWZmlGs

The full video this comes from proves why slicing a cone gives the same shape as the two-thumbtacks-and-string construction, which is beautiful.

Editing from long-form to short by Dawid Kołodziej

Or, for reference: youtu.be/HZGCoVF3YvM

Editing from long-form to short by Dawid Kołodziej

Or, for reference: youtu.be/lG4VkPoG3ko

Long-to-short editing by Dawid Kołodziej

Or, for reference: youtu.be/OkmNXy7er84

Editing from the original video into this short by Dawid Kołodziej

Or, for reference: youtu.be/Cz4Q4QOuoo8

That video answers various viewer questions about the index of refraction.

Editing from long-form to short by Dawid Kołodziej

Thanks to these viewers for their contributions to translations

Chinese: ZstringX

French: GiveMeChocolate, Yoyodotpy

German: Josh, dlatikay

Hindi: VaMErYT, rajeshwar-pandey

Korean: tebaioioo

Or, for reference: youtu.be/KTzGBJPuJwM

That video unpacks the mechanism behind how light slows down in passing through a medium, and why the slow-down rate would depend on color.

Editing from long-form to short by Dawid Kołodziej

Or, for reference: youtube.com/playlist?list=PLZHQObOWTQDPD3MizzM2xVFitgF8hE_ab

Editing from long-form to short by Dawid Kołodziej

Or, for reference: youtu.be/v68zYyaEmEA

That video describes using information theory to write a bot that plays Wordle

Editing from long-form to short by Dawid Kołodziej

Thanks to these viewers for their contributions to translations

French: PyStL

Spanish: Yago Iglesias

Or, for reference: youtu.be/mH0oCDa74tE

That video introduces group theory and the monster group.

Editing from long-form to short by Dawid Kołodziej

Or, for reference: youtu.be/NaL_Cb42WyY

That video explores how this question leads to a quandary on prime numbers, and how a pattern in primes allows for a clean final answer.

Editing from long-form to short by Dawid Kołodziej

Or, for reference: youtu.be/VYQVlVoWoPY

That video gives multiple examples of lying with visual proofs

Editing from the original video into this short by Dawid Kołodziej

Or, for reference: youtu.be/d-o3eB9sfls

(An active link is on the bottom of the video player)

Or, for reference: youtu.be/zeJD6dqJ5lo

Thanks to Dawid Kołodziej for long-to-short editing

Or, for reference: youtu.be/GNcFjFmqEc8

And this one: youtu.be/LqbZpur38nw

(Description links are not active in the shorts player, but you can follow the link at the bottom of the video screen itself)

Or, for reference: youtu.be/IaSGqQa5O-M

It describes convolutions in probability, extending to the continuous case

Editing from long-form to short by Dawid Kołodziej
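
As a small illustration of the discrete starting point for convolutions in probability mentioned above, the sketch below convolves the distribution of one fair die with itself to get the distribution of the sum of two dice. The function name and the list-based representation are assumptions for illustration; the continuous case replaces the sum with an integral.

```python
def convolve(p, q):
    """Discrete convolution of two sequences of probabilities."""
    result = [0.0] * (len(p) + len(q) - 1)
    for i, a in enumerate(p):
        for j, b in enumerate(q):
            result[i + j] += a * b  # all pairs whose indices add up to i + j
    return result


die = [1 / 6] * 6               # index k holds P(face = k + 1)
two_dice = convolve(die, die)   # index k holds P(sum = k + 2)

for total, prob in enumerate(two_dice, start=2):
    print(total, round(prob, 4))
```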

Or, for reference: youtu.be/KuXjwB4LzSA

That video introduces convolutions, as used in image processing, probability, and signal processing.

Editing from long-form to short by Dawid Kołodziej

Or, for reference: youtu.be/851U557j6HE

These are known as Borwein integrals

Editing from long-form to short by Dawid Kołodziej

Or, for reference: youtu.be/yuVqxCSsE7c

A puzzle about stolen necklaces, from a video about the Borsuk Ulam theorem in topology

Editing from long-form to short by Dawid Kołodziej

Or, for reference: youtu.be/X8jsijhllIA

Editing from long-form to short by Dawid Kołodziej

3b1b on a meta-puzzle: youtu.be/wTJI_WuZSwE

(These description links aren't active in the shorts player, but you can follow the link on the bottom of the video screen itself)

This comes from a collaboration I did with Stand-up Maths, where on his channel we covered the solution, and here on 3blue1brown we analyze a meta-puzzle.

Editing from long-form to short by Dawid Kołodziej

Or, for reference: youtu.be/EK32jo7i5LQ

Thanks to Dawid Kołodziej for editing this short from the original.

Or, for reference: youtu.be/QCX62YJCmGk

Filming by Quinn Brodsky

Editing from long-form to short by Dawid Kołodziej

Or, for reference: youtu.be/r6sGWTCMz2k

That video tells the story of how this concept was originally invented to solve the heat equation.

Thanks to Dawid Kołodziej for editing together this short

Or, for reference: youtu.be/3s7h2MHQtxc

Editing from the original video into this short by Dawid Kołodziej

Or, for reference: youtu.be/KTzGBJPuJwM

There, I wanted to dig deeper to understand how light slows down, and why this would depend on the color.

Or, for reference: youtu.be/jsYwFizhncE

Sliding blocks on a frictionless plane, count their collisions, and...

Thanks to Dawid Kołodziej for editing together this short

Or, for reference: youtu.be/YtkIWDE36qU

Thanks to Dawid Kołodziej for editing together this short